April 28, 2026

© Gate of AI

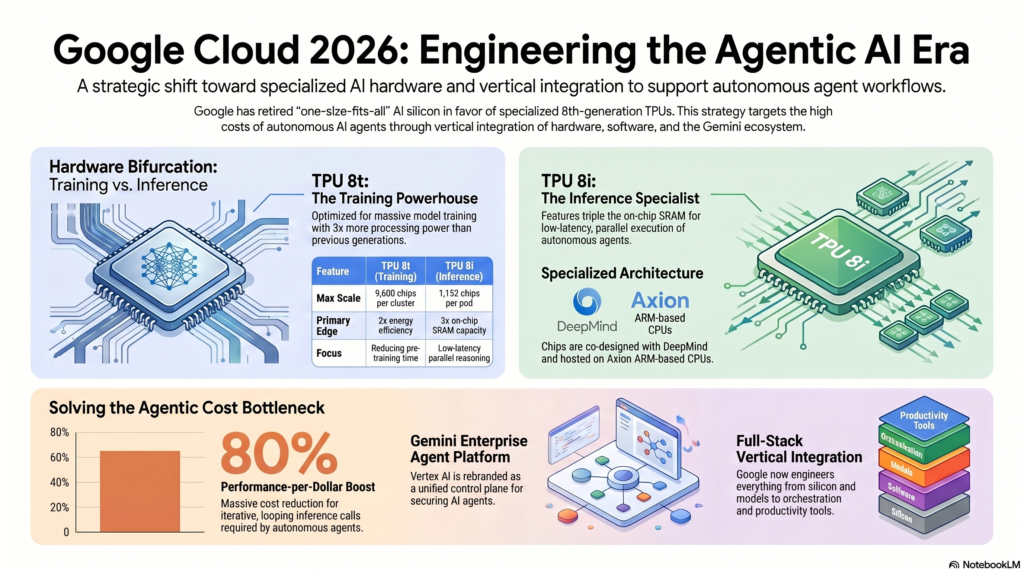

Google has officially retired the “one-size-fits-all” AI chip. With the launch of two 8th-generation TPUs, the TPU 8t and TPU 8i, alongside a sweeping rebrand of Vertex AI, Google is betting that extreme vertical integration is the only way to beat OpenAI and NVIDIA.

Executive Summary

- Hardware Bifurcation: Google introduced two distinct 8th-gen chips: the TPU 8t (optimized for massive model training) and the TPU 8i (optimized for low-latency agent inference).

- The Vertex Rebrand: Vertex AI has been officially consolidated and rebranded as the Gemini Enterprise Agent Platform, shifting the focus from traditional ML tooling to agent-driven enterprise automation.

- Economic Scale: Google claims an 80% improvement in performance per dollar for inference workloads, aimed at the steep compute costs of autonomous agents that loop through many inference calls.

What Happened

During the April 22 keynote at Cloud Next 2026 in Las Vegas, Google Cloud CEO Thomas Kurian laid out a new foundational architecture for the company’s AI strategy. Framing the industry as having entered the “Agentic Era,” Google officially shifted away from building general-purpose silicon.

Historically, a single chip design was expected to handle both the training of massive trillion-parameter models and the high-throughput inference required to serve them. Google is betting that this assumption no longer holds. By introducing two specialized chips, the TPU 8t and TPU 8i, co-designed with Google DeepMind and paired with Google’s Arm-based Axion host CPUs, Google is matching its data center hardware to the distinct computational demands of autonomous AI agents.

The Architecture Breakdown

| Hardware | Specs & Capabilities | Strategic Purpose |
|---|---|---|
| TPU 8t (Training) | Scales up to 9,600 chips via inter-chip interconnects. 3x the processing power and 2x the energy efficiency of the previous generation. | Built to drastically reduce pre-training time for massive frontier models. |
| TPU 8i (Inference) | 1,152 chips per pod. Triple the on-chip SRAM capacity of its predecessor (Ironwood). | Engineered specifically for low-latency, parallel execution of millions of autonomous agents. |

Why This Matters: The Agentic Cost Bottleneck

The shift from generative chatbots to agentic workflows has broken traditional cloud economics. A chatbot typically makes one inference call per user prompt. An AI agent may make hundreds of iterative, looping inference calls to complete a single workflow (e.g., querying a database, verifying code, correcting its own errors).

If enterprises are billed at standard inference rates for every step of an agentic workflow, large-scale deployment becomes financially unsustainable. Google pitches the TPU 8i as the answer. By tripling on-chip SRAM relative to Ironwood, the 8i can keep far more agent context in fast on-chip memory, which Google says delivers an 80% improvement in performance per dollar. If that figure holds, enterprises could run swarm-scale reasoning without exhausting their IT budgets.
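To make the cost argument concrete, here is a back-of-envelope sketch in Python. The per-call price and the 200-call agent loop are hypothetical placeholders, not Google figures; the only number taken from the article is the claimed 80% performance-per-dollar gain.

```python
# Back-of-envelope agent cost model. All prices and call counts are
# HYPOTHETICAL placeholders, not published Google Cloud pricing.

def workflow_cost(calls_per_task: int, cost_per_call: float) -> float:
    """Cost of one task when every reasoning step is a separate inference call."""
    return calls_per_task * cost_per_call


def cost_after_perf_per_dollar_gain(cost: float, gain: float) -> float:
    """A gain of `gain` (0.8 means +80%) in performance per dollar means the
    same amount of work now costs 1 / (1 + gain) of what it did before."""
    return cost / (1.0 + gain)


if __name__ == "__main__":
    cost_per_call = 0.002  # hypothetical dollars per inference call

    chatbot = workflow_cost(1, cost_per_call)   # one call per prompt
    agent = workflow_cost(200, cost_per_call)   # hypothetical 200-step agent loop
    print(f"chatbot: ${chatbot:.4f}  agent: ${agent:.4f}  ratio: {agent / chatbot:.0f}x")

    # Apply the claimed +80% performance per dollar to the agent workload.
    cheaper = cost_after_perf_per_dollar_gain(agent, 0.8)
    print(f"agent after +80% perf/$: ${cheaper:.4f} ({1 - cheaper / agent:.1%} less)")
```

The sketch highlights two points. First, cost scales linearly with the number of calls, so a 200-step agent costs 200 times as much as a single chatbot reply at the same rate. Second, an 80% gain in performance per dollar cuts the cost of a fixed workload by about 44% (1 − 1/1.8), not by 80%.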

The Software Layer: Farewell, Vertex AI

Hardware is useless without an orchestration layer. Recognizing this, Google has officially retired the Vertex AI branding, consolidating its sprawling machine learning stack into the unified Gemini Enterprise Agent Platform.

This is more than a name change; it is a signal to the market. Google is no longer selling “machine learning tools” to data scientists. It is selling a full-stack, end-to-end control plane for deploying and securing AI agents that run natively alongside Google Workspace and third-party tools such as Salesforce and Workday.

Our Take

Google’s announcements at Cloud Next 2026 are a direct declaration of full-stack warfare against both OpenAI and NVIDIA. While NVIDIA remains the undisputed leader in GPUs, Google’s pitch rests entirely on vertical integration.

Google is betting that enterprise CTOs don’t want to assemble their infrastructure piecemeal. They want a single provider where the silicon (TPU 8i), the foundation model (Gemini 3.x), the orchestration environment (Gemini Enterprise Agent Platform), and the productivity suite (Workspace) are all engineered by the same company to run seamlessly together. It is an extremely ambitious bet, but if the claimed 80% gain in performance per dollar (roughly a 44% lower cost for the same work) holds up in production, Google may have just solved the industry’s biggest scaling problem.