April 30, 2026

© Gate of AI

As AI coding agents like Cursor and AutoGen take over enterprise development in Q2 2026, a new crisis is emerging for CTOs: the compounding, unpredictable token costs of runaway LLM reasoning loops. Welcome to the era of “Token Anxiety.”

At a Glance

| | |
|---|---|
| 📉 Core Issue | Exponential Token Consumption by Autonomous Agents |
| 🎯 Affected Audience | CTOs, MLOps Engineers, and Software Development Teams |
| 🛠️ Market Response | Rise of “Token Dashboards”, KV-Caching, and Lightweight Routing Models |

The Hidden Cost of Autonomy

For the past two years, the AI industry has been obsessed with autonomy. We successfully transitioned from passive chatbots that require step-by-step human prompting to active, agentic systems that can browse the web, write code, and execute software tasks entirely in the background.

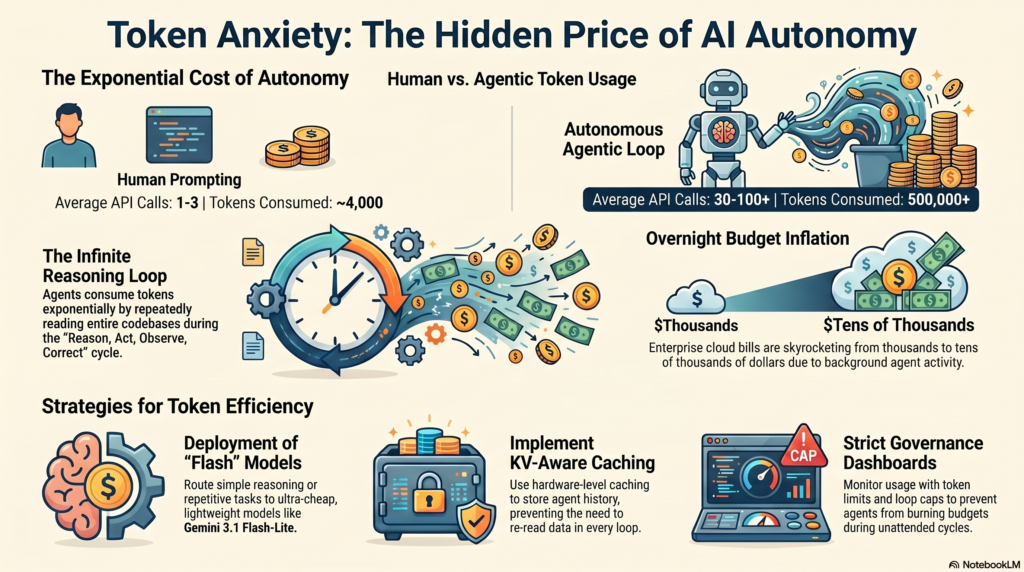

But this autonomy has revealed a massive financial blind spot. In April 2026, the leading buzzword echoing through Silicon Valley boardrooms is “Token Anxiety.” An autonomous agent operates in a continuous loop (Reason, Act, Observe, Correct), and every pass re-sends its working context to the model, so token consumption compounds with each iteration. An agent assigned a seemingly simple task such as “refactor the authentication module” might hit a bug, self-correct, and re-read a 50,000-token codebase twenty times in five minutes, which adds up to roughly one million input tokens for a single task.
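To make the loop arithmetic concrete, here is a minimal sketch in Python. The function name and the `growth_per_step` parameter are illustrative assumptions: the model simply treats every iteration as re-sending the base context plus whatever the agent has appended so far.

```python
def cumulative_input_tokens(context_tokens: int, steps: int, growth_per_step: int = 0) -> int:
    """Total input tokens sent across `steps` loop iterations.

    Assumption: each iteration re-sends the base context plus everything
    appended in earlier iterations (observations, diffs, error logs).
    """
    total = 0
    for step in range(steps):
        total += context_tokens + step * growth_per_step
    return total


# The article's example: a 50,000-token codebase re-read twenty times.
fixed = cumulative_input_tokens(50_000, 20)

# Same loop, but each pass also appends a hypothetical 2,000 tokens of history.
growing = cumulative_input_tokens(50_000, 20, growth_per_step=2_000)

print(f"fixed context:   {fixed:,} tokens")
print(f"growing context: {growing:,} tokens")
```

Under these assumptions the fixed-context case grows linearly with the number of steps, while the accumulating-history case grows roughly quadratically, which is why long loops get expensive so quickly.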

The Math Behind the Panic

To understand why enterprises are panicking, look at the fundamental math of a coding agent using a high-end reasoning model (like Claude 4.7 or GPT-5.5):

| Workflow Type | Average API Calls | Tokens Consumed per Task |
|---|---|---|
| Standard Human Prompting | 1 to 3 | ~4,000 |
| Autonomous Agentic Loop | 30 to 100+ | 500,000+ |

When a team of 50 developers deploys background agents to handle unit testing and refactoring, cloud bills can jump from thousands to tens of thousands of dollars almost overnight. The friction is no longer about capability; it is about cost control.
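A rough back-of-the-envelope calculation shows how the per-task figures in the table scale to a team. The price per million tokens, the number of tasks per developer per day, and the number of workdays below are placeholder assumptions, not real quotes or measurements; substitute your own numbers.

```python
# Hypothetical blended price in USD per million tokens (not a real quote).
PRICE_PER_MILLION_TOKENS_USD = 5.00


def monthly_cost_usd(
    tokens_per_task: int,
    tasks_per_dev_per_day: int,
    developers: int,
    workdays: int = 22,
    price_per_million: float = PRICE_PER_MILLION_TOKENS_USD,
) -> float:
    """Estimate a monthly API bill from per-task token usage."""
    tokens = tokens_per_task * tasks_per_dev_per_day * developers * workdays
    return tokens / 1_000_000 * price_per_million


# Per-task token counts come from the table; 10 tasks/day is an assumption.
human = monthly_cost_usd(4_000, tasks_per_dev_per_day=10, developers=50)
agent = monthly_cost_usd(500_000, tasks_per_dev_per_day=10, developers=50)

print(f"human prompting: ${human:,.2f} per month")
print(f"agentic loops:   ${agent:,.2f} per month")
print(f"ratio:           {agent / human:.0f}x")
```

Whatever price you plug in, the ratio between the two workflows is driven entirely by the per-task token counts, so it stays at 125x under the table's figures.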

How the Industry is Responding

Tech giants and MLOps platforms are racing to build infrastructure that mitigates Token Anxiety. We are seeing three massive shifts this month:

- The Rise of “Flash” Models: Providers are releasing heavily optimized, ultra-cheap models specifically for high-volume agent tasks. Google’s recent launch of Gemini 3.1 Flash-Lite is designed precisely to handle the repetitive reasoning loops of agents at a fraction of the cost.

- Hardware Caching (NVIDIA Dynamo): As we recently reviewed, platforms like NVIDIA Dynamo are implementing KV-aware caching, which keeps the attention key-value state for an agent’s already-processed context in GPU memory so that the shared context does not have to be reprocessed from scratch on every single loop.

- Token Dashboards: Internal IT departments are adopting strict “Token Dashboards,” gamifying token limits and throttling background agents to prevent junior developers from accidentally burning the monthly API budget over the weekend.
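The dashboard idea in the last bullet can be sketched as a small budget tracker. The class name, the 80% throttle threshold, and the agent identifiers are all hypothetical; a real deployment would read usage from the provider's billing or usage data instead of manual `record` calls.

```python
from dataclasses import dataclass, field


@dataclass
class TokenBudget:
    """Tracks token usage per agent against a shared monthly limit."""

    monthly_limit: int
    throttle_ratio: float = 0.8  # assumed threshold: throttle at 80% of the limit
    used_by_agent: dict[str, int] = field(default_factory=dict)

    @property
    def used(self) -> int:
        return sum(self.used_by_agent.values())

    def record(self, agent_id: str, tokens: int) -> None:
        """Add `tokens` to the running total for `agent_id`."""
        self.used_by_agent[agent_id] = self.used_by_agent.get(agent_id, 0) + tokens

    def status(self) -> str:
        """Return 'ok', 'throttled', or 'blocked' based on total usage."""
        if self.used >= self.monthly_limit:
            return "blocked"
        if self.used >= self.throttle_ratio * self.monthly_limit:
            return "throttled"
        return "ok"

    def leaderboard(self) -> list[tuple[str, int]]:
        """Agents sorted by tokens consumed, heaviest first."""
        return sorted(self.used_by_agent.items(), key=lambda kv: kv[1], reverse=True)


budget = TokenBudget(monthly_limit=1_000_000)
budget.record("refactor-bot", 500_000)
budget.record("test-bot", 350_000)
print(budget.status())
print(budget.leaderboard())
```

A scheduler could check `status()` before launching each background run and pause non-urgent agents once the budget reports "throttled".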

Gate of AI Verdict

Token Anxiety is the inevitable growing pain of the Agentic Era. The companies that win in 2026 will not be the ones that simply deploy the smartest AI models; they will be the ones that engineer the most token-efficient agent architectures. Developers must pivot from writing “perfect prompts” to designing efficient “agent state machines” that know exactly when to stop thinking and ask a human for help.
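What “knowing when to stop” might look like in code: a minimal sketch of the stopping logic only, assuming a hypothetical `step_fn` callback that stands in for one full Reason/Act/Observe cycle and reports whether the task is solved and how many tokens the cycle used. The default limits are illustrative.

```python
from enum import Enum
from typing import Callable


class Outcome(Enum):
    DONE = "done"
    ASK_HUMAN = "ask_human"


def run_agent(
    step_fn: Callable[[int], tuple[bool, int]],
    max_steps: int = 10,
    token_budget: int = 200_000,
) -> tuple[Outcome, int, int]:
    """Run a bounded agent loop.

    `step_fn(step)` is a stand-in for one agent cycle and returns
    (task_solved, tokens_used). The loop ends in one of two terminal
    states: DONE, or ASK_HUMAN when either limit is exhausted.
    Returns (outcome, steps_taken, tokens_spent).
    """
    tokens = 0
    for step in range(1, max_steps + 1):
        solved, used = step_fn(step)
        tokens += used
        if solved:
            return Outcome.DONE, step, tokens
        if tokens >= token_budget:
            # Budget exhausted before the task was solved: escalate.
            return Outcome.ASK_HUMAN, step, tokens
    # Step limit reached without a solution: escalate.
    return Outcome.ASK_HUMAN, max_steps, tokens


# A stuck agent burning 50,000 tokens per cycle is stopped by the budget.
stuck = run_agent(lambda step: (False, 50_000))

# An agent that succeeds on its third cycle finishes normally.
solved = run_agent(lambda step: (step == 3, 10_000))

print(stuck)
print(solved)
```

The design choice here is that escalation to a human is a normal terminal state with its own return value, not an exception, so callers have to handle it explicitly.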

💡 How to Adapt

- Implement strict loop limits (e.g., max 10 steps per agent).

- Route simple data-checking tasks to cheaper “Flash” models.

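- Use your provider’s native prompt caching so that the stable part of each request (system prompt, codebase context) is not billed at full price on every loop.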
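The routing and caching tips can be sketched together. The model identifiers and the task categories below are hypothetical placeholders, and the prompt layout assumes a provider whose prompt cache matches on an unchanged prefix, so stable content goes first and per-step content goes last.

```python
CHEAP_MODEL = "flash-tier-model"          # hypothetical identifier
REASONING_MODEL = "reasoning-tier-model"  # hypothetical identifier

# Assumed set of task types considered simple enough for the cheap tier.
SIMPLE_TASKS = {"data_check", "lint", "format", "classify"}


def pick_model(task_type: str) -> str:
    """Route simple task types to the cheap tier, everything else to the reasoning tier."""
    return CHEAP_MODEL if task_type in SIMPLE_TASKS else REASONING_MODEL


def build_prompt(system_prompt: str, codebase_snapshot: str, step_notes: list[str]) -> str:
    """Put stable content first and volatile content last.

    Keeping the beginning of the prompt identical across iterations
    lets a prefix-based prompt cache reuse it from one loop to the next.
    """
    stable_prefix = f"{system_prompt}\n\n{codebase_snapshot}"
    volatile_suffix = "\n".join(step_notes)
    return f"{stable_prefix}\n\n{volatile_suffix}"


prefix = "You are a refactoring agent.\n\n<codebase snapshot>"
first = build_prompt("You are a refactoring agent.", "<codebase snapshot>", ["step 1: ran tests"])
second = build_prompt(
    "You are a refactoring agent.",
    "<codebase snapshot>",
    ["step 1: ran tests", "step 2: fixed import"],
)

print(pick_model("data_check"), pick_model("refactor"))
print(first.startswith(prefix) and second.startswith(prefix))
```

Whether the shared prefix is actually served from cache, and at what discount, depends on the provider, so check its documentation for minimum prefix lengths and cache lifetimes.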